What is an AI memory system?

An AI memory system is infrastructure that lets agents retrieve facts, artifacts, decisions, and evidence beyond the current prompt or session.

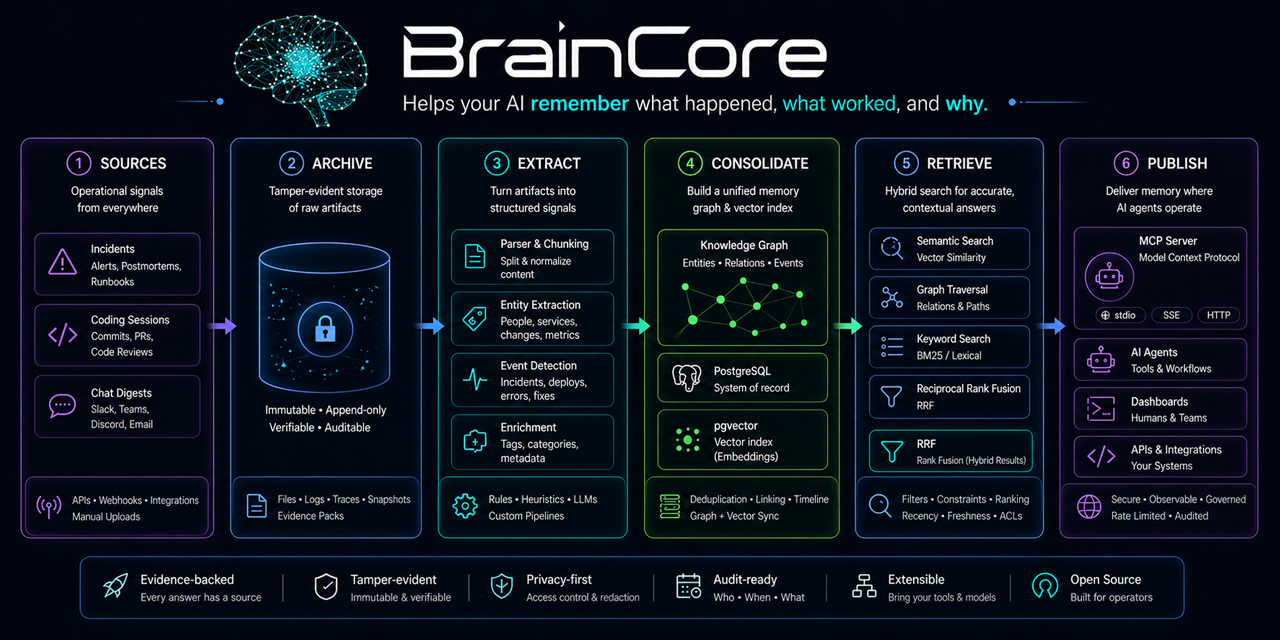

Durable agent memory is not a longer prompt. A serious AI memory system stores artifacts, extracts facts, tracks provenance, records time, and retrieves context through inspectable infrastructure rather than relying on conversation history alone.

Agents need memory because context windows are temporary and session summaries are lossy. A useful memory layer records source artifacts first, extracts evidence-linked facts, preserves temporal state, and gives agents a way to ask what changed, what fixed a problem, and which facts are trusted.
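The artifact-first ordering described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not BrainCore's schema: the names `Artifact`, `Fact`, `MemoryStore`, `evidence_span`, and the `"unverified"` trust label are all hypothetical. The point is only that a fact cannot be stored unless it points at text inside an artifact that was stored first.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Artifact:
    """Raw source material, stored before anything is extracted from it."""
    artifact_id: str
    content: str
    ingested_at: datetime


@dataclass(frozen=True)
class Fact:
    """A claim extracted from an artifact, with a pointer back to its evidence."""
    statement: str
    artifact_id: str                 # provenance: which artifact supports the claim
    evidence_span: tuple[int, int]   # character offsets inside the artifact
    valid_from: datetime             # temporal state: when the claim became true
    trust: str = "unverified"        # hypothetical trust label


class MemoryStore:
    """In-memory stand-in for a real store (illustrative only)."""

    def __init__(self) -> None:
        self.artifacts: dict[str, Artifact] = {}
        self.facts: list[Fact] = []

    def ingest(self, content: str, now: datetime) -> Artifact:
        # Content-derived ID so the same artifact is not stored twice.
        artifact_id = hashlib.sha256(content.encode("utf-8")).hexdigest()[:12]
        artifact = Artifact(artifact_id, content, now)
        self.artifacts[artifact_id] = artifact
        return artifact

    def add_fact(self, statement: str, artifact: Artifact,
                 quote: str, valid_from: datetime) -> Fact:
        if artifact.artifact_id not in self.artifacts:
            raise ValueError("artifact must be ingested before facts are extracted")
        start = artifact.content.find(quote)
        if start < 0:
            raise ValueError("quote not found in artifact; refusing unsupported fact")
        fact = Fact(statement, artifact.artifact_id,
                    (start, start + len(quote)), valid_from)
        self.facts.append(fact)
        return fact

    def evidence_for(self, fact: Fact) -> str:
        """Return the exact artifact text that supports a fact."""
        start, end = fact.evidence_span
        return self.artifacts[fact.artifact_id].content[start:end]
```

A caller would ingest a log or transcript, then register facts by quoting the supporting passage; `evidence_for` returns that passage so a reviewer can check the claim against its source.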

| Memory Type | Typical Contents | Limitation |
|---|---|---|
| Chat memory | User preferences, conversation summaries, recurring instructions. | Useful for personalization, but weak for operational proof and incident continuity. |
| Vector RAG | Document chunks embedded for semantic retrieval. | Good for recall, but often weak on time, trust status, and source-to-fact provenance. |
| Graph memory | Entities and relationships across documents or events. | Powerful when curated, but can become hard to inspect if facts are not evidence-linked. |
| Operational memory | Artifacts, incidents, facts, episodes, evidence segments, playbooks, and verification state. | Requires disciplined ingestion and trust classes, but is better suited to long-running agent work. |

BrainCore's public positioning is evidence-grounded operational memory. It preserves source artifacts, extracts facts with provenance, applies trust classes, and retrieves through SQL, full-text search, vector similarity, temporal expansion, and optional graph-path retrieval.

Store the source artifact first so extracted memory can be traced back to evidence.

A fact can be searchable before it is promoted as trusted public knowledge.

Agents need to know when something was true, stale, superseded, or last verified.
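One way to make "true, stale, superseded, or last verified" concrete is to store a few timestamps per fact and derive its state at query time. The sketch below is an assumption-laden illustration: `FactRecord`, `state_at`, the state names, and the 30-day freshness window are hypothetical choices, not values taken from BrainCore.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class FactRecord:
    statement: str
    valid_from: datetime                      # when the fact became true
    last_verified: datetime                   # last time someone or something confirmed it
    superseded_at: Optional[datetime] = None  # set when a newer fact replaces this one


def state_at(fact: FactRecord, when: datetime,
             max_age: timedelta = timedelta(days=30)) -> str:
    """Classify a fact's temporal state at a given moment (hypothetical labels)."""
    if when < fact.valid_from:
        return "not_yet_valid"
    if fact.superseded_at is not None and when >= fact.superseded_at:
        return "superseded"
    if when - fact.last_verified > max_age:
        return "stale"
    return "current"


if __name__ == "__main__":
    t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
    fact = FactRecord("db primary is host-a", valid_from=t0, last_verified=t0)
    print(state_at(fact, t0 + timedelta(days=1)))
    print(state_at(fact, t0 + timedelta(days=45)))
```

Supersession is checked before staleness so that a replaced fact is never reported as merely out of date.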

Retrieval latency matters because memory must fit inside agent work loops. But memory quality also depends on evidence coverage, trust status, temporal validity, and whether the retrieved context answers the current operational question. BrainCore's public production-corpus artifacts report 26,966 facts, latency of 71.6 ms at P50 and 85.2 ms at P95 with the vector stream disabled, and 98.52% fact-evidence coverage. Those are useful proof points, but they should not be stretched into broad "best" or "SOTA" claims.
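For readers who want to compute comparable figures on their own corpus, the sketch below shows one plausible definition of each metric. The source does not say how BrainCore calculates its numbers, so both definitions are assumptions: `percentile` uses the nearest-rank method (other percentile definitions give slightly different values), and `evidence_coverage` counts a fact as covered when it carries at least one evidence reference in a hypothetical `evidence_ids` field.

```python
import math
from typing import Dict, List, Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def evidence_coverage(facts: List[Dict]) -> float:
    """Percentage of facts that link to at least one piece of evidence."""
    if not facts:
        return 0.0
    linked = sum(1 for fact in facts if fact.get("evidence_ids"))
    return 100.0 * linked / len(facts)


if __name__ == "__main__":
    latencies_ms = [10.0, 20.0, 30.0, 40.0]  # made-up timings for illustration
    print(percentile(latencies_ms, 50), percentile(latencies_ms, 95))
    print(evidence_coverage([{"evidence_ids": ["a"]}, {"evidence_ids": []}]))
```

Reporting both numbers together keeps a fast but poorly grounded store from looking better than it is.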

A practical AI memory system has to solve four jobs at once: preserve raw evidence, extract structured facts, retrieve relevant context, and prevent stale or unsupported knowledge from being promoted as truth. That is why operational memory cannot be treated as a simple embedding store.

The result is not just longer context. It is a system of record for what the agent is allowed to remember, why it believes that memory, and whether a human or process has promoted that memory into trusted operational knowledge.

RAG usually retrieves document chunks. Operational memory also tracks artifacts, extracted facts, time, trust status, and provenance.

PostgreSQL provides inspectable structured data, constraints, SQL, and full-text search; pgvector adds semantic retrieval in the same system.
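A short sketch of what "in the same system" can look like: one SQL statement that filters with PostgreSQL full-text search and a trust constraint, then orders by pgvector cosine distance. The table `facts`, its columns (`id`, `statement`, `trust_class`, `embedding`), and the `"trusted"` value are hypothetical, and the filter-then-rank strategy is just one option rather than BrainCore's documented query. The function expects a DB-API connection that uses `%s` placeholders, as psycopg does.

```python
from typing import Any, List, Sequence, Tuple

# Full-text match and trust filter in WHERE; pgvector's <=> operator
# (cosine distance) decides the ordering. Table and columns are hypothetical.
HYBRID_QUERY = """
SELECT id, statement, trust_class,
       ts_rank(to_tsvector('english', statement),
               websearch_to_tsquery('english', %s)) AS text_rank,
       embedding <=> %s::vector AS cosine_distance
FROM facts
WHERE to_tsvector('english', statement) @@ websearch_to_tsquery('english', %s)
  AND trust_class = %s
ORDER BY cosine_distance ASC
LIMIT %s
"""


def vector_literal(embedding: Sequence[float]) -> str:
    """Format a Python sequence as pgvector's text form, e.g. '[0.1,0.2]'."""
    return "[" + ",".join(str(float(x)) for x in embedding) + "]"


def search_facts(conn: Any, text_query: str, embedding: Sequence[float],
                 trust_class: str = "trusted", limit: int = 5) -> List[Tuple]:
    """Run the hybrid query on a DB-API connection and return the rows."""
    params = (text_query, vector_literal(embedding), text_query, trust_class, limit)
    cur = conn.cursor()
    try:
        cur.execute(HYBRID_QUERY, params)
        return cur.fetchall()
    finally:
        cur.close()
```

Because the result is an ordinary SQL row set, the same query can be run by hand in `psql` to inspect why a fact was or was not retrieved.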

Agents can ask what changed, what fixed an issue, what evidence supports a claim, and which facts are trusted or stale.

Use the operational memory guide for incident, coding-session, and infrastructure continuity patterns.